Jaap-Henk Hoepman’s Privacy Is Hard and Seven Other Myths: Achieving Privacy through Careful Design is a compact and attractively designed book that aims to do two things: scotch a handful of myths about privacy, and make a positive case for how digital technology can protect it. For the author, digital technologies resemble the reprogrammable T-800, able to pivot from being a menace to humanity in the first Terminator film to being its (not quite) saviour in the second.

Myths about privacy are plentiful, and Hoepman’s selection is interesting as much for what it leaves out as for what it includes. One omission is the myth of the privacy paradox. Privacy has been commodified: a ‘data privacy solution’ costs anything from $50 a month for some cookie pop-ups to a few hundred dollars a month if you want to ‘gain user consent’ seamlessly. The fact that there is competition over who cares the most about your privacy would suggest we have come some way since Zuckerberg pronounced its death as a ‘social norm’, but the notion still has widespread currency. Another myth is that data are somehow abstract: weightless, invisible and odourless elements stored in a non-physical cloud. In fact, data mean computing power, which relies on materials extracted from the earth to build the hardware and generate the electricity, and on human labour that is usually poorly remunerated early in the supply chain. Still another myth is that privacy violations are only committed by other people. This is the essential logic of the marketing message accompanying Google’s Privacy Sandbox, Facebook’s exclusion of third parties from its APIs, and Apple’s App Tracking Transparency. In none of these initiatives, as far as I can tell, did the gigantic companies in question undertake to reveal or improve their own data practices.

Meanwhile, Privacy is Hard hints at a number of underlying questions.

First world problems

First, whose privacy? Today’s digitalised society of course affects the rights to privacy and data protection, but it does so unevenly. This unevenness is a function of the vast and growing inequalities within and between societies. The Global South is unfortunately often absent from these discussions, which is inexcusable given the growing body of scholarship now available – Achille Mbembe or Nanjala Nyabola, to cite just two – plus the empirical analyses contained in the UNCTAD Digital Economy Reports of recent years. These analyses reveal populations of poorer countries being farmed for their data by Chinese and US tech companies in exchange for connectivity, electronic IDs and various other gadgets and services. Migrants are the objects of tracking and biometrics technology, and will become more so as our environment deteriorates and geopolitics become increasingly volatile in the coming decades. As for children, a Human Rights Watch report found last week that almost all EdTech products risked or infringed on their rights. Amazon warehouse workers and Uber drivers have long been treated as slaves to the algorithm, and as a result of the pandemic the market for workplace surveillance is now on steroids. Workers have little choice but to acquiesce if they want to keep their jobs.

Yet privacy is alive and well, and always will be, for the powerful. Despite the efforts of congressional majorities and state attorneys general, still no one can get at Donald Trump’s tax returns. Mark Zuckerberg would not tell Congress what hotel he was staying in, although his social media empire is built on the collection of such trivial data concerning everyone else. Independent audit and transparency are always resisted by the powerful, with a plethora of tools like NDAs, non-compete clauses and trade secrets wielded by smart lawyers ready to intimidate and exhaust any potential challengers, such that the mere threat of litigation is enough to chill most of them into silence.

Hoepman’s proposition about using tech to enhance privacy may well be valid for those of us who are familiar with VPNs, encrypted messaging apps and the like. However, it is not fair to place the onus on everyone to become so tech-savvy. The very act of collecting and using data is an indication of power. Kate Crawford has been concerned specifically with AI, but her call to reason is equally apt for any processing of data, especially at scale, given the computing power, human resources and administrative and legal apparatus it requires.

‘Datasets [in AI] are never raw materials to feed algorithms: they are inherently political interventions. The entire practice of harvesting data, categorizing and labelling it, and then using it to train systems is a form of politics.’

Hoepman recognises the risk inherent in assuming a rational consumer when reality is marked by power imbalances. In the concentrated markets of the digital economy, where the dominant business model is tracking and profiling, how can there be genuine choice? If there is no choice, how can there be freely given consent, the only valid form of consent under the GDPR? This is where the legal regimes of privacy and competition intersect, yet regulators still have not managed to converge on a solution.

Wizards of Oz

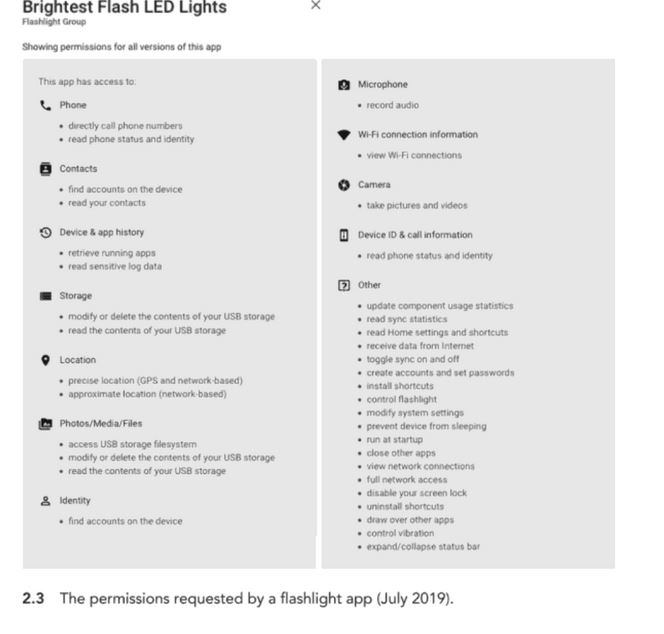

Second, what actually motivates all this rampant data harvesting? The book singles out a random Android mobile app, ‘Brightest Flash LED’, which hoovers up all manner of data utterly irrelevant to the functioning of a torch (location, address book and microphone recordings), as an example of indispensable, privacy-invasive business models. What is not clear is why anyone would configure such an app to collect so much personal data in the first place. Typically these data get funnelled to Google and Facebook. Is their end goal really manipulating our brains to buy more stuff? (Or, as the authors of the EU AI Act put it, to ‘deploy subliminal techniques beyond a person’s consciousness in order to materially distort a person’s behaviour in a manner that causes or is likely to cause that person or another person physical or psychological harm’.) Privacy is Hard cites Carole Cadwalladr’s contention that the abuses of Cambridge Analytica tipped the 2016 referendum towards Brexit and, of course, I would like this to be true. Unfortunately, this is an easy answer to a complex question, and it runs the risk of doing the surveillance capitalists’ marketing for them, free of charge.

Hoepman asserts early in the book that Google, Facebook “and the like … know everything we share on their platform…” Do they really, or have they just managed to get advertisers to believe them? The author later reflects that thinking about surveillance is not enough and that ‘perhaps we need to shift our attention and focus on the underlying capitalist premise instead’ (including a reference to Morozov’s stunning dissection of Zuboff’s Surveillance Capitalism), but here the thought is left tantalisingly hanging in the air. (In fact, there is no need to assume this is a typically capitalist malaise; a digitalised feudal system would be driven by the same dynamics of power.) He also recognises that Google and Facebook are basically advertising companies. Facebook monopolises (for now) the inventory of social media eyeballs, and its various other tracking techniques are so ubiquitous that you cannot escape them whether or not you have signed up to any of its services. Google has by far the biggest search engine and is powerful on both the demand and supply sides of the adtech ecosystem. These companies certainly, therefore, want your data. The question, left unexplored, is whether they are monetising the actual data or rather the belief in their claim that their data gives them unrivalled powers of predicting future behaviour on the basis of past behaviour. Such claims cannot be verified because there is no transparency about what the companies really do with the data. We all see ‘personalised ads’ for the same things we have only just purchased. Now internal documents obtained by Motherboard suggest that Facebook itself does not know what data it has or what happens to them. Most data are simply never used; most companies do not have the imagination or computing power to get value from them. Surveillance capitalism might more accurately be described as industrial-scale data hoarding: hoarding that requires the burning of fossil fuels. In such a scenario, the emperor has no clothes, and it is all one massive scam.

The simulacra of privacy

Furthermore, for all the discussion of using tech to hide your data, it would be a shame to reduce all digital relationships to zero trust by default. This would not reflect how we behave in real life. Hoepman indeed cites Helen Nissenbaum’s work on the contextual nature of privacy. If you are in a relationship of trust and respect, you have few qualms about exposing yourself; otherwise, there are gradations in the privacy that human dignity demands. These norms have been unsettled by the growing interpolation of technology, and of the relatively few entities that control it, into social and economic life, such that you have no choice but to expose yourself to those entities even though you do not trust them. Can any systems redesign be expected to address this? I am not sure. Hoepman concludes that privacy could be restored if we could ‘redo the plumbing’. Perhaps we should instead focus on repairing socio-economic ties and diminishing the depredations of gigantic, faceless intermediaries.

And yet, Privacy is Hard is timelier than its author could have anticipated. It treats privacy as more or less coterminous with the processing of personal data, which is not a problem for anyone except the most unreconstructed European legal geek. But there are infringements of privacy that make data protection violations look trivial. In the US, there are now well-founded fears of a real-life spillover when the Supreme Court, as expected, overturns Roe v Wade, which rests on a putative but contested right to privacy. Red states are lining up to criminalise not just abortion but also contraception and abortion support. So all of the personal data harvested by platforms, apps and websites will become susceptible to disclosure to law enforcement, including data concerning women worried about what to do about pregnancy or fertility.

The market for privacy as a commodity may have come to resemble Baudrillard’s simulacrum, a representation that bears ‘no relation to any reality whatsoever’. But the privacy-protecting tech solutions Hoepman promotes, like encryption and minimising data retention, could well suddenly become essential rather than nice-to-haves. Market-driven and voluntary privacy by design could become the only safeguard in the United States against the intrusion of the state into a person’s most intimate sphere – if only everyone were able to avail themselves of it.
